A team of computer science researchers with members from Google, ETH Zurich, NVIDIA and Robust Intelligence is highlighting two kinds of dataset poisoning attacks that bad actors could use to corrupt the results of AI systems. The group has written a paper outlining the kinds of attacks they have identified and has posted it on the arXiv preprint server.

With the development of deep-learning neural networks, artificial intelligence applications have become big news. Because of their unique learning abilities, they can be applied in a wide variety of settings. But, as the researchers on this new effort note, one thing all of them have in common is the need for high-quality data for training.

Because such systems learn from what they see, if they come across something that is wrong, they have no way of knowing it, and so they incorporate it into what they have learned. As an example, consider an AI system that is trained to recognize patterns on a mammogram as cancerous tumors. Such a system would be trained by showing it many examples of real tumors collected from mammograms.

But what happens if someone inserts images into the dataset that show cancerous tumors but are labeled as non-cancerous? The system would soon begin missing those tumors, because it has been taught to see them as non-cancerous. In this new effort, the research team has shown that something similar can happen with AI systems that are trained on publicly available data from the Internet.
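To make the mislabeling idea concrete, here is a toy sketch that is not taken from the paper. It assumes a nearest-centroid classifier over a single hypothetical "suspiciousness score" per scan; the scores, labels and the `train_centroids`/`predict` helpers are invented for illustration. Real mammography models are deep networks, but the sketch shows the same basic effect: tumor-like examples labeled as benign drag the model's notion of "benign" toward tumors.

```python
from statistics import mean


def train_centroids(samples):
    """Toy 'training': average the feature value seen for each label."""
    by_label = {}
    for feature, label in samples:
        by_label.setdefault(label, []).append(feature)
    return {label: mean(values) for label, values in by_label.items()}


def predict(centroids, feature):
    """Assign the label whose centroid is closest to the feature value."""
    return min(centroids, key=lambda label: abs(centroids[label] - feature))


# Hypothetical 1-D score per mammogram (0 = clearly benign, 1 = clearly cancerous).
clean = [(x, "benign") for x in (0.1, 0.2, 0.3, 0.2, 0.1)] + [
    (x, "cancerous") for x in (0.8, 0.9, 0.85, 0.95, 0.9)
]

# Poison: tumor-like scans (score 0.75) deliberately labeled as benign.
poison = [(0.75, "benign")] * 20

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

test_scan = 0.7  # a tumor-like scan neither model has seen
print("clean model:   ", predict(clean_model, test_scan))
print("poisoned model:", predict(poisoned_model, test_scan))
```

With the clean data the "benign" centroid sits near 0.18, so the test scan is labeled cancerous; after poisoning it moves to roughly 0.64, close enough to the scan that the model now calls it benign.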

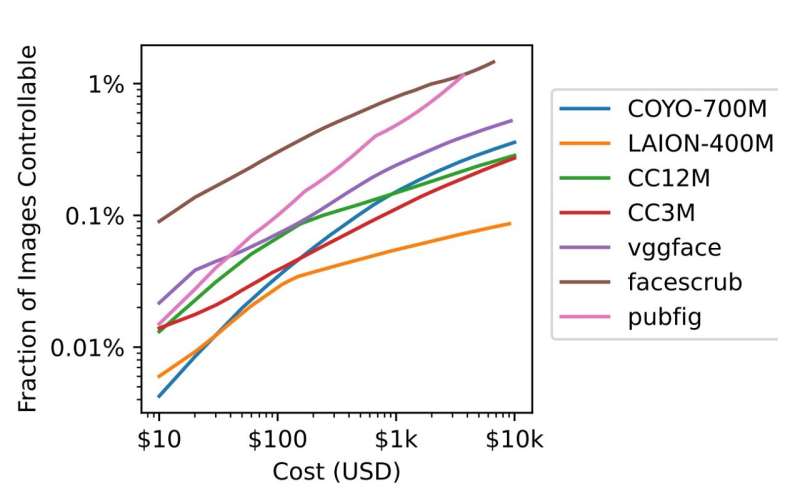

The researchers began by noting that ownership of Internet domains often expires, including domains hosting content that has been used as a source for training AI systems. That leaves those domains available for purchase by nefarious types looking to disrupt AI systems. If such domains are bought and then used to host pages with false information at the same URLs, the AI system will add that information to its knowledge base just as readily as it would true information, and that can lead the system to produce less than desirable results.

The research team calls this type of attack split-view poisoning. Testing showed that such an approach could be used to buy enough URLs to poison a large portion of mainstream AI systems for as little as $10,000.
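The "split view" is easier to see in a small simulation. This sketch assumes, as a simplification, that a dataset is published as a list of URLs and captions that each trainer downloads independently, so the curator and a later trainer can see different content at the same URL. The `.example` URLs, the in-memory `web_at_curation`/`web_at_training` dictionaries, the `download` helper and the `sha256` field recorded at curation time are all hypothetical; the hash is included only so the two views can be compared, not as a description of any particular dataset's format.

```python
import hashlib
from urllib.parse import urlparse


def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


# Hypothetical state of the web when the dataset was curated.
web_at_curation = {
    "http://old-photo-blog.example/cat.jpg": b"<bytes of a real cat photo>",
    "http://news-site.example/dog.jpg": b"<bytes of a real dog photo>",
}

# The published dataset: URLs and captions (plus a hash recorded at curation time).
dataset_index = [
    {"url": url, "caption": caption, "sha256": sha256(web_at_curation[url])}
    for url, caption in [
        ("http://old-photo-blog.example/cat.jpg", "a cat on a sofa"),
        ("http://news-site.example/dog.jpg", "a dog in a park"),
    ]
]

# Later, the blog's domain expires and an attacker registers it.
expired_domains = {"old-photo-blog.example"}
web_at_training = dict(web_at_curation)
for url in list(web_at_training):
    if urlparse(url).hostname in expired_domains:
        web_at_training[url] = b"<attacker-chosen image>"


def download(index, web):
    """What a trainer actually fetches: whatever each URL serves today."""
    return [(entry["caption"], web[entry["url"]], entry["sha256"]) for entry in index]


for caption, content, recorded_hash in download(dataset_index, web_at_training):
    status = "matches curation" if sha256(content) == recorded_hash else "CHANGED since curation"
    print(f"{caption!r}: {status}")
```

The caption "a cat on a sofa" is still paired with whatever the new domain owner chooses to serve, which is the core of the attack: the dataset's text looks unchanged while the content behind it does not.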

There is another way that AI systems could be subverted: by manipulating data in well-known data repositories such as Wikipedia. This could be done, the researchers note, by editing data just before regular data dumps, so that moderators cannot spot and undo the changes before they are captured and used by AI systems. They call this approach frontrunning poisoning.
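The timing argument behind frontrunning can be sketched in a few lines. This is an illustrative model, not the paper's method: it treats a data dump as capturing an article at a single known instant, and assumes a moderator reverts any malicious edit after a fixed delay. The `Edit` class, `text_in_snapshot` helper, the 30-minute revert delay and the minute-600 dump time are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Edit:
    made_at: float      # minutes since some reference time
    reverted_at: float  # when a moderator undoes the edit


def text_in_snapshot(original: str, poisoned: str, edit: Edit, snapshot_at: float) -> str:
    """Return the article text a dump taken at `snapshot_at` would capture."""
    if edit.made_at <= snapshot_at < edit.reverted_at:
        return poisoned  # the dump fires while the bad edit is live
    return original


SNAPSHOT_AT = 600.0   # hypothetical, predictable dump time
REVERT_DELAY = 30.0   # hypothetical moderator response time

for made_at in (100.0, 590.0):
    edit = Edit(made_at=made_at, reverted_at=made_at + REVERT_DELAY)
    captured = text_in_snapshot("accurate text", "malicious text", edit, SNAPSHOT_AT)
    print(f"edit at minute {made_at:.0f}: dump captures {captured!r}")
```

An edit made hours early is reverted before the dump and never reaches the data, while one made ten minutes before the dump is captured even though moderators remove it shortly afterward. The attack depends on knowing roughly when the dump will happen.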

More information:

Nicholas Carlini et al, Poisoning Web-Scale Training Datasets is Practical, arXiv (2023). DOI: 10.48550/arxiv.2302.10149

© 2023 Science X Network

Citation:

Two types of dataset poisoning attacks that can corrupt AI system results (2023, March 7)

retrieved 18 March 2023

from https://techxplore.com/information/2023-03-dataset-poisoning-corrupt-ai-results.html

This document is subject to copyright. Apart from any fair dealing for the purpose of private study or research, no part may be reproduced without written permission. The content is provided for information purposes only.
